Words into feeling

This is the start of our story of the practicalities of adaptation and transformation: how we are going about rendering a text into a sequence of actions and experiences that create a new kind of meaning.

From the start the technology disappointed (see previous post), so we got cracking on the creative. Always a good plan. Working with very new technology, it is so easy to get sucked into the detail of the hardware and software (I2C serial modes or SDA lines) and lose sight of your initial objectives, but also to forget to play. So, in our first couple of sessions we dived into the text. Led by Anthony, and based on lectures he gives at the University, we analysed the themes and images of the story. As James said in a previous post, these were really interesting sessions; the complexity and modernity Anthony revealed in the story were compelling.

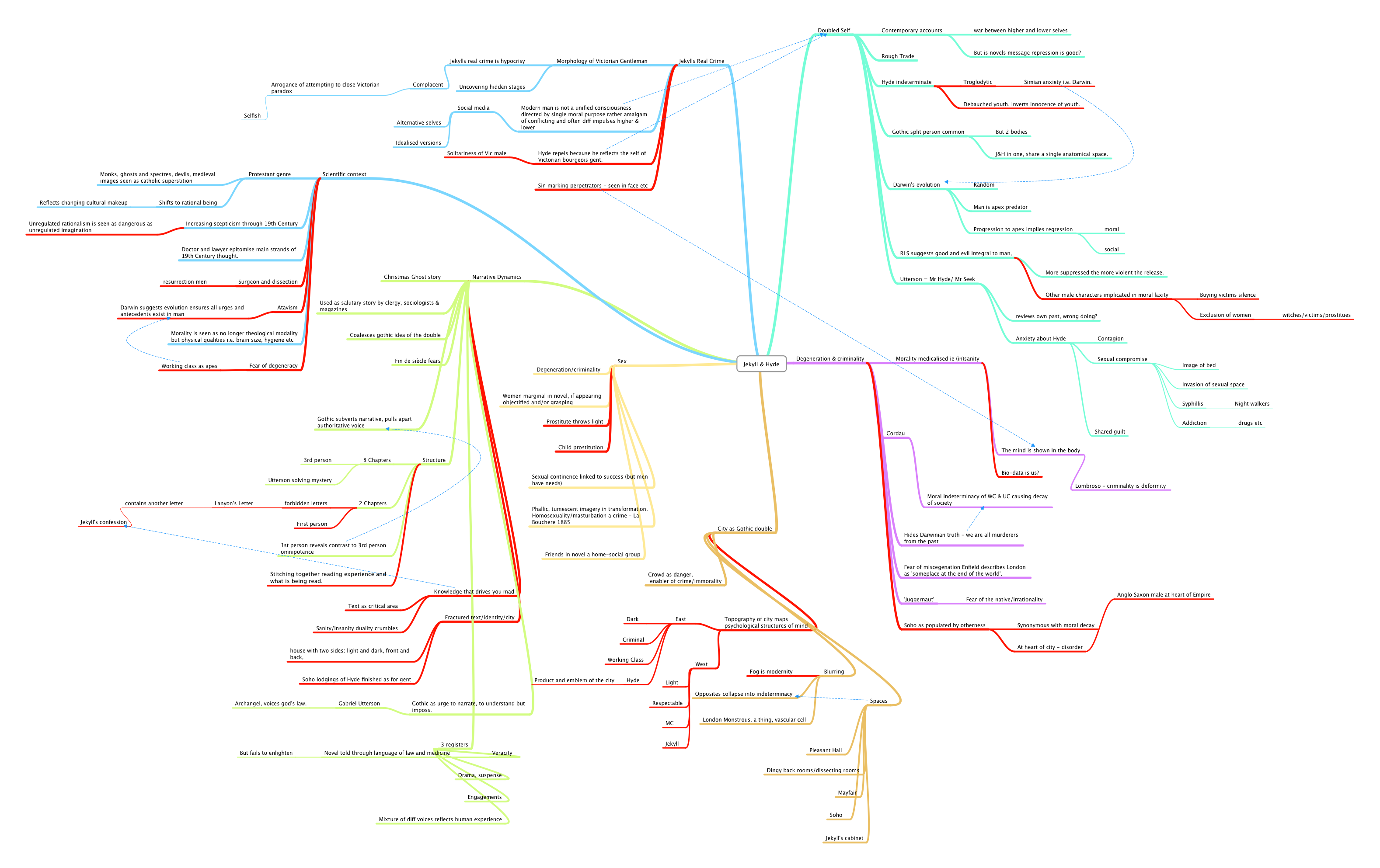

I like to work with mind maps. I think in patterns, and the visual representation of structure, rather than structure encoded in a sequence of words, suits me. There is also a basic translation process: notes are made on the text, then these notes are ordered spatially in the map, with connections and hierarchy encoded in the layout. This helps focus; I highlight points that are particularly interesting in red, and those that are directly connected become particular areas of focus. The map below is Anthony’s first lecture, an overview of the ideas, themes, histories and structures of the story.

In week two we began the analysis of what happens where. One of the key steps in adaptation, I think, is reduction. If we remove point of view, person, aspect and tense (i.e. the authorial devices that characterise the written word), we are left with people undertaking a series of actions in places (see map below). Our goal is to discover the authorial devices that characterise the pervasive, interactive form and use them to bring new perspectives and readings to the text, to create new meanings.

The next step was to (re)combine the themes of the work with the bare bones of the person/action/location armature. For example, if you could read the map above, you could see that doors are hugely symbolic, representing the inner and outer (of self, of the city), itself a representation of the duality that permeates the novel: east and west, upper and lower class, human/animal and so on. The novel is the account of the blurring of this binary reality, but is also indeterminate itself, with multiple viewpoints and different textual representations (letters, wills, witness statements, recollections). As such it is a very modern work, dismantling the facade of Victorian moral certainty to uncover an unsettling ambiguity.

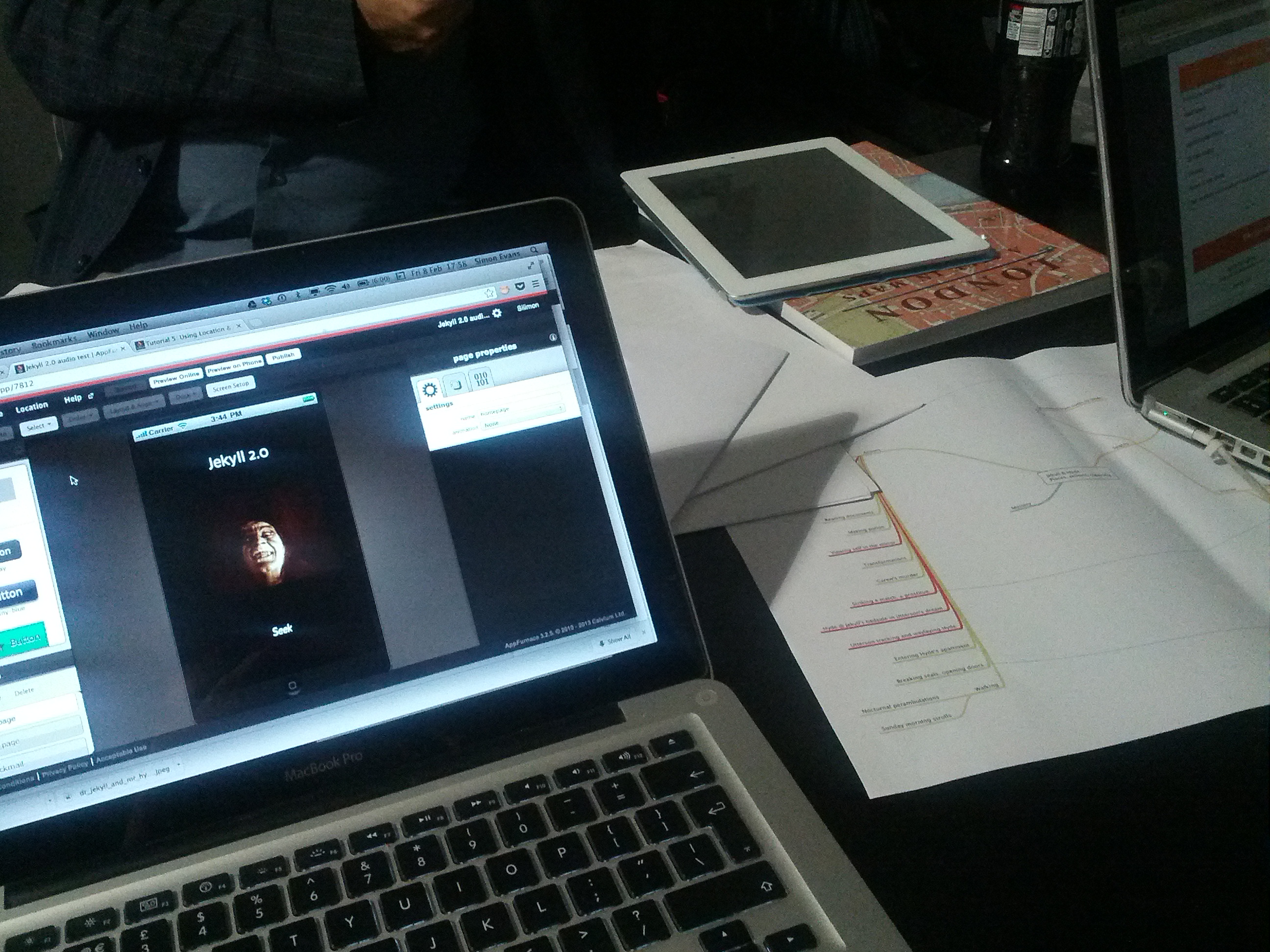

The rub is how we render that beautiful, expressive complexity within an interactive piece, and one that is driven by participants’ bio-data. As explained, the tech is proving problematic, so, extemporising somewhat, I suggested last week that we have a go at adapting key passages from the book for the spoken word, using AppFurnace as a platform. This online app-builder tool will allow us to have these audio passages triggered, on a mobile phone, by locations around Bristol that match those of the novel. My thinking was that undertaking this would create important learning: we’d begin to break the book down into sections we could use; experiment with sound as an interface in the finished piece; and begin to link participants’ actions, moving around the city, with the story.
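For anyone curious about the underlying logic of location-triggered audio, here is a minimal sketch in Python. It is not AppFurnace code (the builder handles this for you), and everything in it is an assumption made for illustration: the zone names, the coordinates (placeholder points roughly in central Bristol, not real locations from the piece), the radii and the clip filenames are all hypothetical. The idea is simply that each passage is tied to a circle on the map, and a clip is due to play the first time the phone's position falls inside that circle.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


@dataclass(frozen=True)
class AudioZone:
    """A circular area on the map with one audio passage attached."""
    name: str
    lat: float       # degrees
    lon: float       # degrees
    radius_m: float  # trigger radius in metres
    clip: str        # hypothetical audio filename


def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle (haversine) distance between two points, in metres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def zones_entered(zones, lat, lon, already_played):
    """Return the zones containing (lat, lon) whose clips have not played yet."""
    hits = []
    for zone in zones:
        if zone.name in already_played:
            continue  # each passage is only triggered once
        if distance_m(lat, lon, zone.lat, zone.lon) <= zone.radius_m:
            hits.append(zone)
    return hits


# Placeholder zones: names, coordinates and filenames are invented for this sketch.
ZONES = [
    AudioZone("door", 51.4545, -2.5879, 30.0, "door_passage.mp3"),
    AudioZone("street", 51.4560, -2.5950, 40.0, "street_passage.mp3"),
]
```

In use, an app would call `zones_entered(ZONES, lat, lon, played)` each time the phone reports a new position, play the clip for any zone returned, and add that zone's name to `played` so the passage does not repeat.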

AppFurnace is a platform that enables non-developers to create apps for iOS and Android without coding skills (although there is a coding level should you want it). I’ve used it before, for an app we did for Bristol’s M Shed a couple of years back. It was in prototype back then and had a fair few glitches. It’s pleasing to see that these have gone and that we could record and code a single chapter of the book in a couple of hours. It’s excellent as a rapid prototyping tool, reminding me of Director back in the 90s.

Using AppFurnace, the goal is not to deliver a polished piece (we’ll be recording the parts ourselves, after all, and I’m no actor) but to use it as part of the creative process. It is flexible and quick enough to be like drawing: a way to create quick scenarios, to think experientially and to have that feed back into the design process without the tech getting in the way. Hopefully we’ll have something for people to try at the next workshop.