The God Article - Getting Started

Mevlevi Sufis playing neys, circa 1900s (Image: source unknown)

The Concept:

The God Article is a project about a simple flute, the Turkish ney. This is an ancient instrument found throughout Asia and suffused with much cultural significance. Although the ney is a simple object, it is notoriously difficult to play and to teach.

The aim of this project is to develop ney replicas augmented with sensors to measure sound, breath and fingering. We hope to experiment with different ways to measure how a musician interacts with the instrument, and how visual feedback around this can be used as an aid in teaching and performance.

Turkish music is complex, and the project will also help transmit a classical tradition both in theory and practice, providing a prototype for the study and performance of flutes across cultures.

Turkish Ney (image: www.forumdas.net)

How it works:

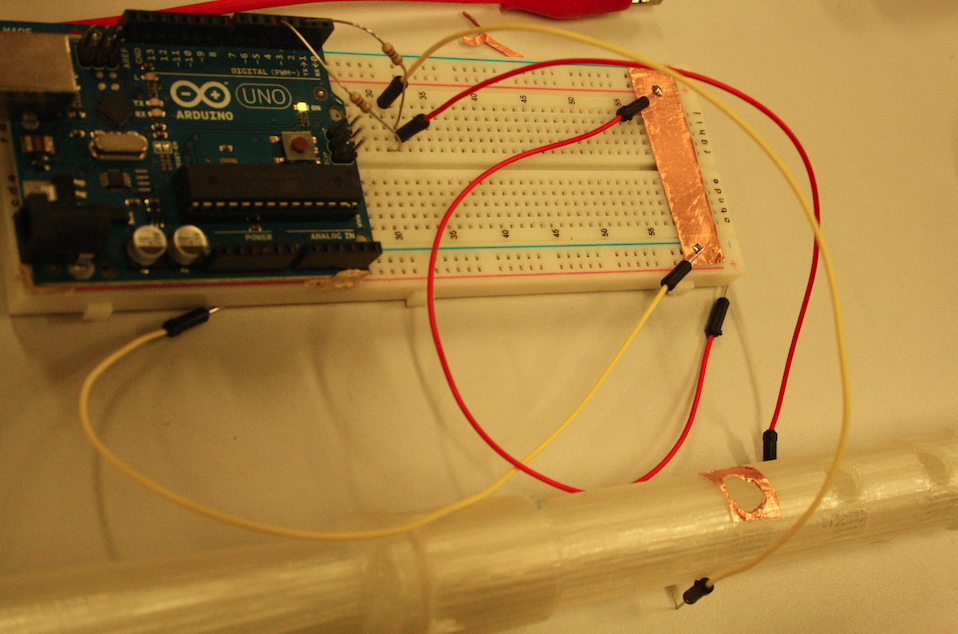

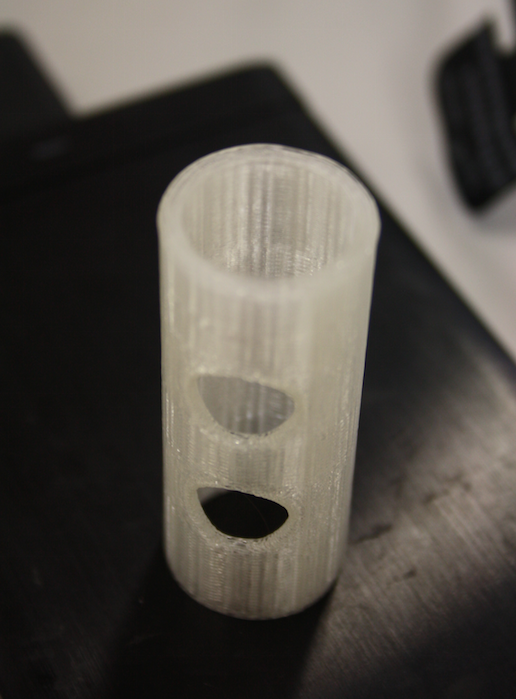

We aim to 3D print a replica ney and place various sensors (for fingering, breath and sound) within it. Whilst we could use a real ney, 3D printing an accurate replica of a real instrument will hopefully allow us to place the electronic components as accurately as possible, without disrupting the internal shape of the instrument, while keeping the sound as true as possible.

The sensors will be connected to an Arduino Board and send data signals (via openFrameworks) to a computer, where those signals will be processed and played back via a browser-based interface.
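To make the data flow a little more concrete, here is a minimal Python sketch of the computer-side step: turning one line of sensor text into a structured reading that a browser-based interface could consume as JSON. Everything about the format is an assumption made for illustration: the comma-separated line layout, the `NeyReading` type, the 0-1023 breath range, and the count of seven touch sensors (one per hole) are hypothetical, not the project's actual protocol.

```python
import json
from dataclasses import dataclass, asdict
from typing import List

# Assumption: one touch sensor per hole, seven holes in total.
NUM_HOLES = 7


@dataclass
class NeyReading:
    breath: int        # raw breath-sensor value (hypothetical 0-1023 range)
    holes: List[bool]  # True where a finger is covering the hole


def parse_line(line: str) -> NeyReading:
    """Parse one hypothetical 'breath,h1,...,h7' text line from the board."""
    fields = line.strip().split(",")
    if len(fields) != 1 + NUM_HOLES:
        raise ValueError(f"expected {1 + NUM_HOLES} fields, got {len(fields)}")
    breath = int(fields[0])
    holes = [field == "1" for field in fields[1:]]
    return NeyReading(breath=breath, holes=holes)


def to_json(reading: NeyReading) -> str:
    """Serialise a reading so that a browser-based interface could use it."""
    return json.dumps(asdict(reading))
```

For example, `to_json(parse_line("512,1,1,0,0,0,0,1"))` yields a JSON object with a `breath` number and a `holes` array of booleans. Rejecting malformed lines early keeps a dropped byte on the wire from silently shifting every sensor value by one position.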

Progress:

After the initial workshops, the team has made some good progress.

We have successfully digitally fabricated the mouthpiece (başpare) of the ney and are currently working on the rest of the instrument:

We’ve also had success with getting the touch-sensitive finger apertures working:

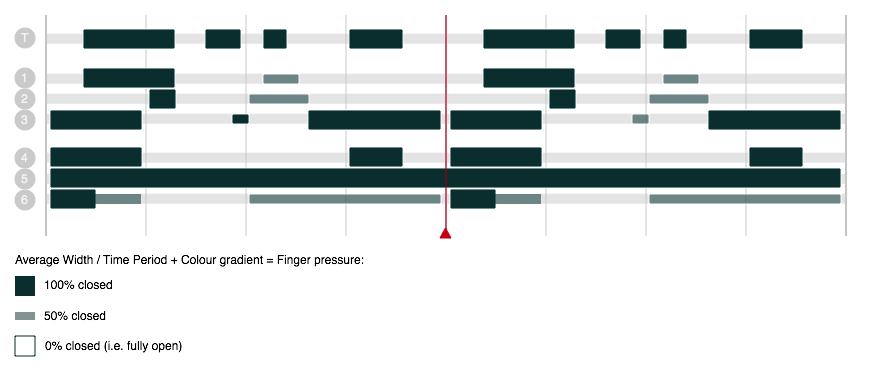

We identified a number of variables that we initially want to measure:

Breath (Across the mouthpiece, potentially also from the bottom of the instrument)

Fingering (Touch)

Tuning (Frequency)

Timbre (Overtones)

Tone (Noisiness)

Amplitude (Dynamics)
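The four sound-related variables above can all be estimated from a short frame of audio samples. The sketch below is one possible approach, not necessarily what the project uses: root-mean-square level for amplitude, an autocorrelation peak for tuning, one minus that peak as a rough noisiness measure, and single-frequency DFTs at multiples of the fundamental for overtones. The function names, the 200-2000 Hz search range and the choice of four harmonics are assumptions for illustration.

```python
import math
from typing import List, Tuple


def rms_amplitude(frame: List[float]) -> float:
    """Root-mean-square level of the frame (a stand-in for 'Amplitude')."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))


def pitch_and_noisiness(frame: List[float], sample_rate: float,
                        f_min: float = 200.0,
                        f_max: float = 2000.0) -> Tuple[float, float]:
    """Estimate the fundamental frequency by autocorrelation.

    Returns (frequency_hz, noisiness). Noisiness is 1 minus the normalised
    autocorrelation peak: close to 0 for a steady tone, close to 1 for noise.
    Returns (0.0, 1.0) for silence or a frame too short to search.
    """
    energy = sum(x * x for x in frame)
    if energy == 0.0:
        return 0.0, 1.0
    lag_min = max(1, int(sample_rate / f_max))
    lag_max = min(int(sample_rate / f_min), len(frame) - 1)
    best_lag, best_corr = 0, -1.0
    for lag in range(lag_min, lag_max + 1):
        corr = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        corr /= energy
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    if best_lag == 0:
        return 0.0, 1.0
    return sample_rate / best_lag, 1.0 - max(best_corr, 0.0)


def harmonic_levels(frame: List[float], sample_rate: float, f0: float,
                    count: int = 4) -> List[float]:
    """Amplitude of the first `count` harmonics of f0 (a view of 'Timbre')."""
    n = len(frame)
    levels = []
    for k in range(1, count + 1):
        w = 2.0 * math.pi * k * f0 / sample_rate
        re = sum(x * math.cos(w * i) for i, x in enumerate(frame))
        im = sum(x * math.sin(w * i) for i, x in enumerate(frame))
        levels.append(2.0 * math.hypot(re, im) / n)
    return levels
```

Because the lag is a whole number of samples, the frequency estimate is quantised, so a real tuning display would likely need interpolation around the peak. Breath and fingering come from the sensors rather than the microphone, so they are not covered here.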

One question is how to represent all of these different types of data in one interface. Ultimately, we want the data to prove useful without becoming a distraction from playing or teaching, so the challenge is to make the feedback and interface simple and usable. We have started to sketch how the data could be represented and are experimenting with different types of interface for the different user groups we have identified.

Displaying breath vs tone/noise statically (with a 'target' highlighted in red)

Audience/Market:

We think there is a range of initial audience groups, including:

A Beginner (e.g. breath and tuning data could be used to help beginners understand how to correctly produce notes within and across the different tonal ranges)

Relevant data: Breath (Top), Tuning (Frequency), Fingering (Touch), Amplitude (Dynamics)

A Professional (e.g. a teacher could use breath and fingering data, alongside audio, to help to explain how to correctly play a traditional tune to a student, and compare the student’s playing with their own)

Relevant data: Tuning (Frequency), Fingering (Touch), Amplitude (Dynamics), Timbre (Overtones)

A Scholar (e.g. a scholar could use the full range of performance data for research purposes, such as analysing and comparing regional differences in style)

Relevant data: Breath (Top, Bottom), Tuning (Frequency), Timbre (Overtones), Tone (Noisiness)

We’ve also identified some secondary audiences for The God Article:

A Scientist: Relevant Data: Fingering (Touch), Tuning (Frequency), Timbre (Overtones), Tone (Noisiness)

A Composer: Relevant Data: Breath (Top, Bottom), Tuning (Frequency), Timbre (Overtones), Tone (Noisiness)
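One way an interface could act on these groupings is to keep them as a lookup table and show each user only the data listed for their group. The sketch below copies the groupings from the lists above; the key names (`breath_top`, `tone` and so on) and the `select_channels` helper are hypothetical names chosen for illustration.

```python
from typing import Dict, List

# Relevant data per audience, as listed above (key names are illustrative).
AUDIENCE_CHANNELS: Dict[str, List[str]] = {
    "beginner": ["breath_top", "tuning", "fingering", "amplitude"],
    "professional": ["tuning", "fingering", "amplitude", "timbre"],
    "scholar": ["breath_top", "breath_bottom", "tuning", "timbre", "tone"],
    "scientist": ["fingering", "tuning", "timbre", "tone"],
    "composer": ["breath_top", "breath_bottom", "tuning", "timbre", "tone"],
}


def select_channels(audience: str, reading: Dict[str, object]) -> Dict[str, object]:
    """Keep only the measurements relevant to the given audience.

    Channels missing from the reading are skipped rather than raising,
    so a partially instrumented prototype can still drive the display.
    """
    wanted = AUDIENCE_CHANNELS[audience]
    return {name: reading[name] for name in wanted if name in reading}
```

Keeping the mapping as data rather than code would make it easy to adjust once testing shows which feedback each group actually finds useful.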

Our questions moving forward:

We have identified quite a few(!) questions about where this is all going. Here are just a few of the wider ones:

How do we (ultimately) expect other people to get access to this system?

Are we developing a product, a platform or both?

What other (expected or unexpected) uses are there for this type of object/system?

Is one ultimate aim to repeat this with other instruments?

Can what we learn be re-used to produce lesson content, etc.?

Could the system be used in museums to allow visitors to play traditional instruments they would not normally be allowed to handle?

Could the system be used as a research tool, to feed into a virtual repository of knowledge (a ‘virtual museum of performance’)?

Our immediate focus:

In order to stay focused and not get bogged down in the wider questions, we have decided to concentrate on producing a working prototype 'digital' ney, which will send data to the computer to be displayed visually in the interface. We aim to test this prototype with a professional ney-player (neyzen), hopefully in early May, in order to learn more about what sort of feedback is useful in different contexts.

We’ll then use what we learn to feed into a second iteration of the prototype.

Posted by the team